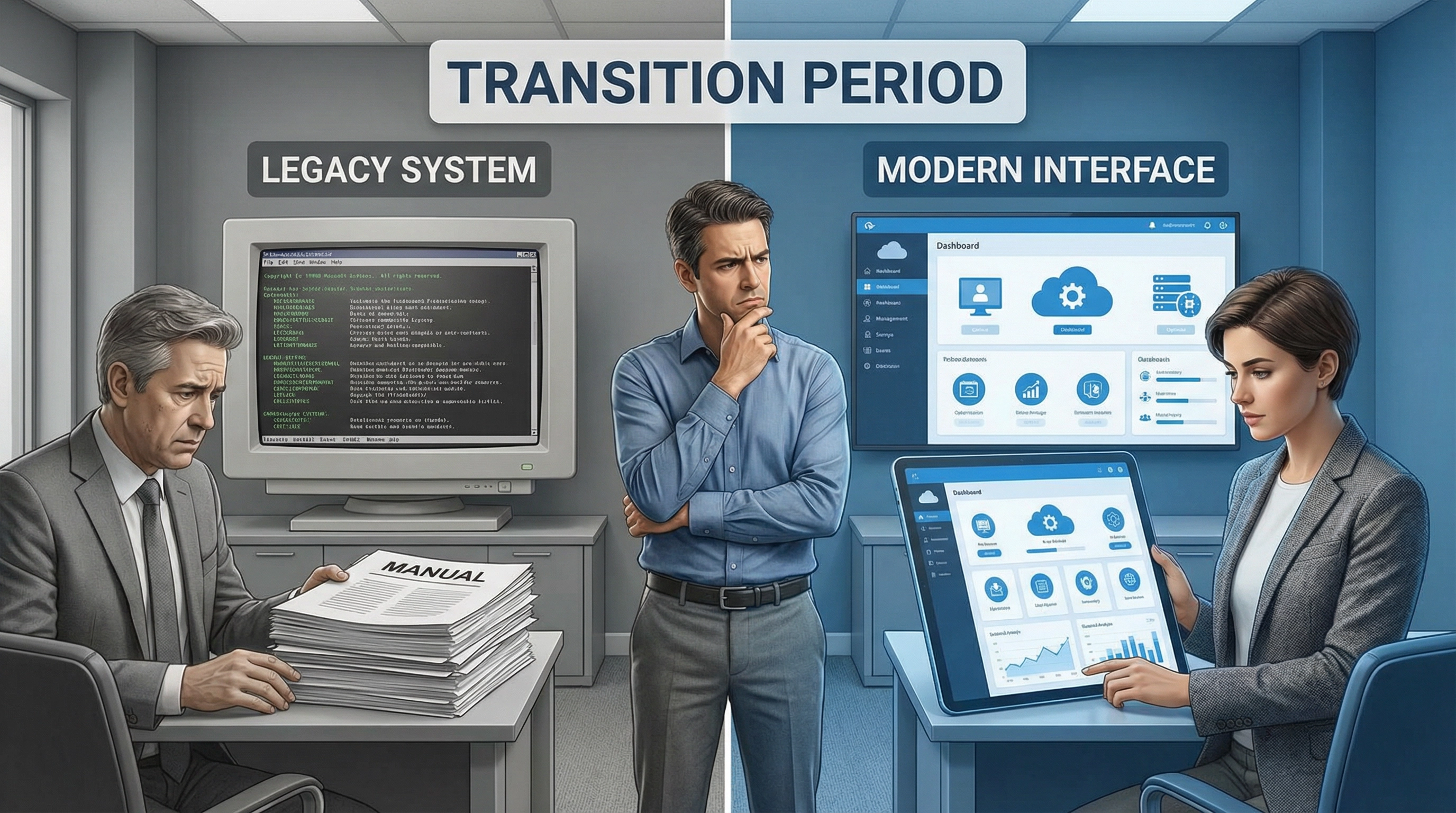

The migration was supposed to take a week. Clean cutover, minimal disruption, everyone trained and ready. That's what the timeline said. What actually happened was a month of operating in two systems simultaneously, with nobody quite sure which one held the current version of anything.

We'd committed to the new platform. Paid for it. Set it up. Migrated most of the data. But "most" isn't "all," and the gap between those two states turned out to be wider than anyone anticipated. Some records didn't transfer cleanly. Some workflows didn't map directly. Some team members kept defaulting to the old system because it was familiar and the new one still felt foreign.

The plan had been to flip a switch. Old system off, new system on. But plans assume clean boundaries, and real work doesn't have clean boundaries. There were ongoing projects that had started in the old system. There were integrations that still pointed to the old database. There were habits that took longer to break than anyone expected.

So we ended up in this strange in-between state. Officially using the new system, but constantly checking the old one to make sure nothing was missed. Entering data twice in some cases, just to be safe. Spending more time managing the transition than actually getting work done. It was exhausting in a way that's hard to explain to anyone who hasn't lived through it.

The worst part wasn't the technical challenges. Those were solvable, even if they took longer than planned. The worst part was the constant low-level uncertainty. Is this the right place to look for that information? Did I update the correct system? Is everyone else seeing what I'm seeing? Small questions, but they added up. Every task required an extra layer of decision-making that hadn't been there before.

People started developing workarounds. Keeping personal notes about where different types of information lived. Creating their own tracking systems to bridge the gap between the two platforms. These workarounds helped in the short term but made the overall situation more complex. Now we had the old system, the new system, and a dozen individual systems that people had cobbled together to make sense of the transition.

Nobody wanted to admit how messy it had become. There was pressure to declare victory, to say the migration was complete and move on. But declaring something complete doesn't make it complete. The confusion persisted, even as we stopped talking about it openly. It just became part of the background noise of getting work done.

Looking back, I can see where we went wrong. We treated the migration as a technical project when it was really a behavioral one. The technical parts (moving data, configuring settings, setting up integrations) were tractable, even when they ran longer than planned. The behavioral parts (changing habits, building new mental models, trusting the new system) took much longer and couldn't be rushed.

We also underestimated how much institutional knowledge was embedded in the old system. Not just data, but the informal practices that had developed around it. The shortcuts people knew. The quirks they'd learned to work around. The unwritten rules about how things were organized. All of that had to be rebuilt in the new system, and that rebuilding happened slowly, through trial and error.

The new system was objectively better in many ways. More features, better interface, stronger integrations. But "better" doesn't mean "immediately usable." There's a learning curve, and that curve is steeper when you're trying to maintain productivity while climbing it. You can't just pause all work for a month while everyone gets comfortable with the new tool. So you end up doing both—learning and working—and neither gets done as well as it should.

The transition period also revealed dependencies we hadn't mapped. Process A relied on data from Process B, which pulled from Process C. In the old system, those connections were established and reliable. In the new system, they had to be recreated, and sometimes the connections didn't work the same way. So processes that used to flow smoothly now required manual intervention, at least temporarily.
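In hindsight, even a rough dependency map would have helped here. What follows is a minimal sketch, not what we actually ran: the process names and the `dependencies` mapping are hypothetical stand-ins for the A, B, C chain described above. It uses Python's standard `graphlib` module to work out which processes need to be recreated first, plus a small helper that lists everything downstream of a given process.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map (an assumption for illustration):
# each process maps to the set of processes it reads data from.
dependencies = {
    "process_a": {"process_b"},
    "process_b": {"process_c"},
    "process_c": set(),
}


def migration_order(deps):
    """Order processes so each one comes after everything it depends on."""
    return list(TopologicalSorter(deps).static_order())


def downstream_of(deps, target):
    """Return every process that directly or indirectly depends on target."""
    affected = set()
    frontier = [target]
    while frontier:
        current = frontier.pop()
        for process, upstream in deps.items():
            if current in upstream and process not in affected:
                affected.add(process)
                frontier.append(process)
    return affected


order = migration_order(dependencies)
print(order)
print(sorted(downstream_of(dependencies, "process_c")))
```

The ordering says to rebuild Process C's connections before B, and B before A. The downstream helper answers the question we kept asking by hand: if this one piece isn't working in the new system yet, what else needs manual intervention?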

There were moments when it felt like we'd made a mistake. Not in choosing the new system, but in attempting the migration at all. The old system had problems, but at least they were familiar problems. The new system had different problems, and we didn't yet know how to solve them. That uncertainty was harder to deal with than the known limitations we'd been working around for years.

Gradually, things stabilized. Not through any single breakthrough, but through accumulated small adjustments. Someone figured out a better way to handle a particular workflow. Someone else discovered a feature that solved a recurring problem. The team started defaulting to the new system without consciously deciding to. The old system became the backup rather than the primary reference.

But it took longer than a week. Longer than a month, really. The official migration might have been complete, but the practical migration—the point where everyone felt comfortable and confident—took closer to three months. And even then, there were occasional moments of confusion when someone would ask "wait, where does this live now?" and we'd have to think about it.

The experience changed how I think about tool transitions. They're not events; they're processes. And processes take time, especially when they involve changing how people work rather than just changing what tools they use. You can't shortcut that time without creating problems that will surface later.

If I were planning a similar migration now, I'd build in more overlap time. Not because the technical migration needs it, but because people need it. They need time to develop confidence in the new system before letting go of the old one. They need permission to be uncertain, to ask basic questions, to move slowly. Rushing that creates stress and mistakes.

I'd also be more honest about what "complete" means. The migration is complete when the data is moved and the old system is shut down. But the transition is complete when people stop thinking about which system to use and just use the new one automatically. Those are different milestones, and conflating them creates false expectations.

For teams considering similar transitions, especially when [adopting new marketing tools](/use-cases/marketing-teams) or moving between platforms, the advice isn't to avoid migrations. Sometimes they're necessary. But go in with realistic expectations about the messy middle period. It's not a sign of failure. It's a normal part of the process. The question isn't whether you'll experience confusion and friction, but how you'll manage it when it happens.

The month between the old way and the new way was uncomfortable. But it was also necessary. You can't jump directly from one stable state to another without passing through instability. The key is recognizing that instability as temporary rather than permanent, and giving people the support and time they need to work through it.

We're fully on the new system now. The old one is decommissioned. Most people have forgotten how chaotic the transition period was. But I haven't. It taught me that changing tools is the easy part. Changing how people work with those tools is where the real challenge lives. And that challenge doesn't fit neatly into a one-week timeline, no matter how well you plan.