Vendor demos follow a predictable pattern. The interface looks polished. The workflow appears seamless. Questions get answered with confidence. By the end of the session, the tool seems like an obvious fit. Then implementation begins, and the gaps between demo and reality become apparent.

The problem isn't that vendors misrepresent their products. Most demos accurately show what the tool can do. What they don't show is how the tool will function in your specific environment, with your data, your workflows, your constraints. The demo optimizes for showing capability. Evaluation needs to optimize for predicting actual performance.

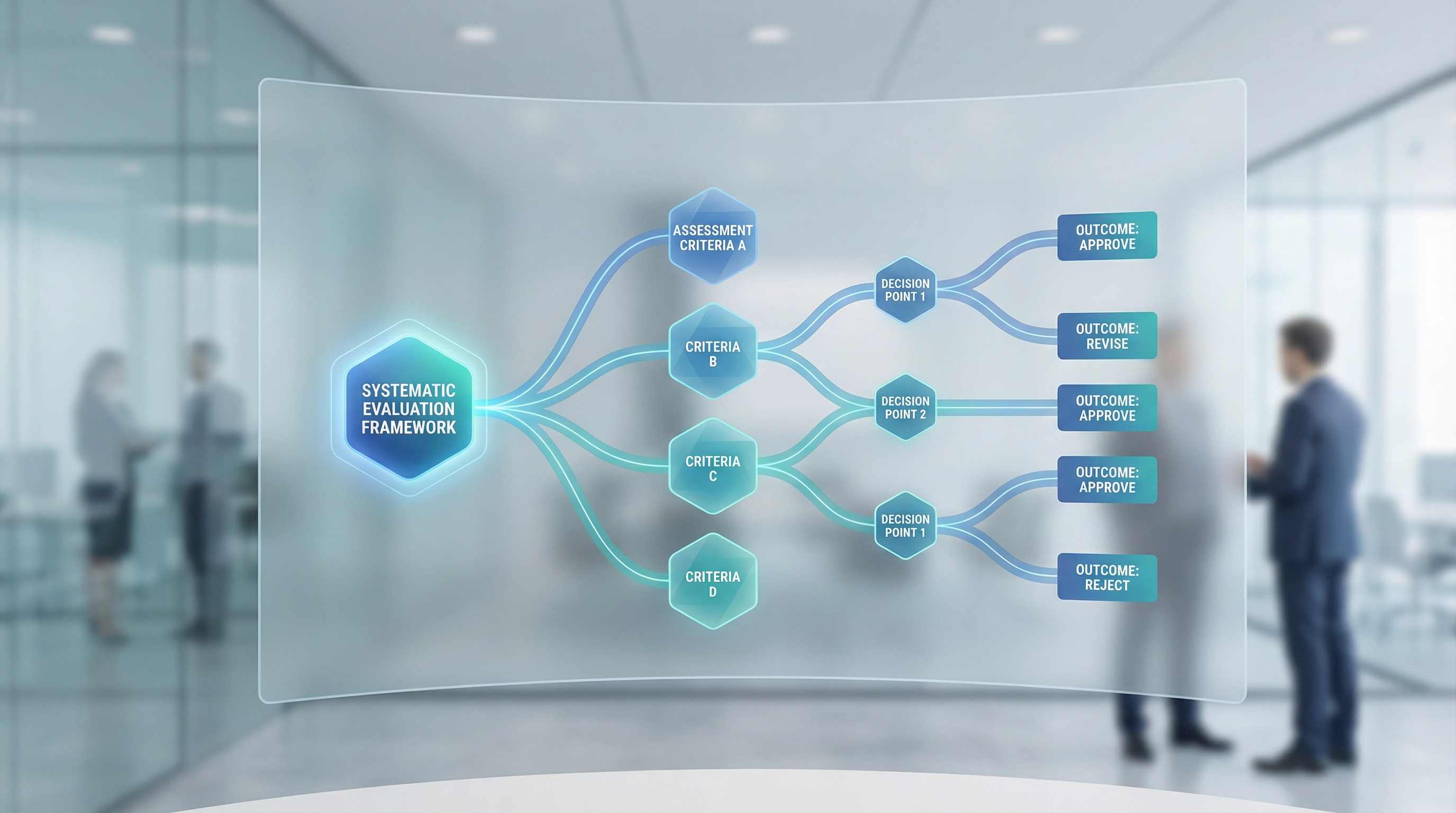

Over years of helping organizations select and implement SaaS tools, I've developed an evaluation framework that goes beyond feature checklists and vendor presentations. It's not complicated, but it demands more work than most selection processes are willing to invest. In my experience, that additional work predicts implementation success more accurately than traditional approaches.

The framework rests on a premise that sounds obvious but gets ignored regularly: the goal of evaluation isn't to find the best tool in abstract terms. It's to find the tool most likely to succeed in your specific context. Those are different questions with different answers.

Start with workflow mapping, not feature lists. Before looking at any tools, document how work actually happens in your organization. Not how it's supposed to happen according to the process manual, but how it happens in practice. Who does what? What information do they need? Where does that information come from? What decisions get made along the way? What happens when things go wrong?

This mapping reveals patterns that feature lists miss. You might discover that a process you thought was linear actually involves multiple feedback loops. Or that information gets entered in one system and then manually transferred to another because the two systems don't integrate well. Or that certain steps only happen under specific conditions that occur irregularly.

These patterns determine which tools will fit and which won't. A tool designed for linear workflows will struggle with processes that require frequent backtracking. A tool that assumes clean data will fail if your data quality is inconsistent. A tool built for regular, predictable tasks will create friction when applied to irregular, exception-heavy work.

One organization I worked with mapped their customer onboarding process and discovered it had seventeen decision points where the workflow could branch in different directions depending on customer type, contract terms, and implementation complexity. The tool they'd been considering handled linear onboarding well but couldn't accommodate that level of conditional logic without extensive custom development. The workflow mapping revealed this mismatch before they'd committed to the purchase.

Once you understand your workflows, identify constraint categories. Every organization has constraints that limit which tools will work. These constraints fall into several categories, and being explicit about them helps filter options effectively.

Technical constraints include existing systems that need to integrate, data formats that need to be supported, security requirements that must be met, and infrastructure limitations that can't be changed quickly. A tool might be excellent in isolation but unusable if it can't connect to your existing systems or meet your security standards.

Organizational constraints include approval processes, budget cycles, procurement requirements, and vendor management policies. A tool that requires annual payment might not work if your budget only allows quarterly commitments. A tool from a small vendor might not pass your procurement review even if it's technically superior.

Human constraints include available expertise, training capacity, change management bandwidth, and ongoing support resources. A tool that requires specialized technical knowledge might not work if you don't have that expertise and can't hire it. A tool that needs constant configuration might not work if nobody has time to manage it.

Temporal constraints include implementation timelines, seasonal workflow variations, and planned organizational changes. A tool that takes six months to implement might not work if you need something functional in two months. A tool that works well for steady-state operations might not work if your workload varies dramatically by season.

Being explicit about these constraints doesn't mean accepting limitations passively. It means being realistic about what can change and what can't, at least in the timeframe that matters for this decision. Some constraints are negotiable. Many aren't. Pretending they don't exist doesn't make them go away; it just delays discovering them until after you've committed to a tool that won't work.
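One way to make non-negotiable constraints explicit is to write them down as pass/fail checks and run every candidate through them before any deeper evaluation. The sketch below is illustrative only: the `Candidate` fields, the tool names, and the three constraints are hypothetical stand-ins drawn from the examples above, not a prescribed list.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """A tool under consideration. All fields are hypothetical examples."""
    name: str
    integrations: set[str] = field(default_factory=set)
    billing_options: set[str] = field(default_factory=set)
    implementation_months: int = 0


# Non-negotiable constraints, each expressed as (label, predicate).
# Replace these with the constraints your own organization cannot change.
HARD_CONSTRAINTS = [
    ("integrates with CRM", lambda c: "crm" in c.integrations),
    ("quarterly billing available", lambda c: "quarterly" in c.billing_options),
    ("live within two months", lambda c: c.implementation_months <= 2),
]


def failed_constraints(candidate: Candidate) -> list[str]:
    """Return the label of every hard constraint the candidate violates."""
    return [label for label, ok in HARD_CONSTRAINTS if not ok(candidate)]


candidates = [
    Candidate("Tool A", {"crm", "erp"}, {"annual"}, 6),
    Candidate("Tool B", {"crm"}, {"annual", "quarterly"}, 2),
]

for c in candidates:
    failures = failed_constraints(c)
    status = "passes" if not failures else "fails: " + ", ".join(failures)
    print(f"{c.name} {status}")
```

Returning the list of failed labels, rather than a bare yes or no, keeps the reason for each rejection visible, which matters when someone later asks whether a constraint was really non-negotiable.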

With workflows mapped and constraints identified, define success criteria. This sounds basic, but most evaluation processes skip it or do it superficially. Success criteria need to be specific enough to be measurable and realistic enough to be achievable.

"Improve productivity" isn't a success criterion. "Reduce time spent on monthly reporting from eight hours to three hours" is a success criterion. "Better customer data" isn't a success criterion. "Increase customer record completeness from sixty percent to ninety percent within three months" is a success criterion.

Success criteria should distinguish between must-have outcomes and nice-to-have outcomes. Must-have outcomes are the minimum required to justify the investment. Nice-to-have outcomes are additional benefits that would be valuable but aren't essential. This distinction matters when evaluating trade-offs. A tool that delivers all must-have outcomes but few nice-to-have outcomes might be better than a tool that delivers some of each but doesn't fully achieve any must-haves.
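The must-have versus nice-to-have distinction can be sketched as a two-level comparison: a tool is judged first on whether it meets every must-have, and only then on how many nice-to-haves it adds. This is a minimal sketch under stated assumptions. The first two criteria reuse the examples above; the third criterion, the tool names, and all measured values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    baseline: float
    target: float
    must_have: bool

    def met(self, measured: float) -> bool:
        """Met when the measured value reaches the target, in whichever
        direction the target lies from the baseline."""
        if self.target < self.baseline:   # lower is better
            return measured <= self.target
        return measured >= self.target    # higher is better


CRITERIA = [
    Criterion("monthly reporting hours", baseline=8, target=3, must_have=True),
    Criterion("customer record completeness %", baseline=60, target=90, must_have=True),
    # Hypothetical nice-to-have, added for illustration.
    Criterion("dashboards built by end users", baseline=0, target=5, must_have=False),
]


def evaluate(measurements: dict[str, float]) -> tuple[bool, int]:
    """Return (all must-haves met, number of nice-to-haves met)."""
    must_ok = all(c.met(measurements[c.name]) for c in CRITERIA if c.must_have)
    nice = sum(c.met(measurements[c.name]) for c in CRITERIA if not c.must_have)
    return must_ok, nice


# Hypothetical test results for two tools.
tool_a = {"monthly reporting hours": 3, "customer record completeness %": 92,
          "dashboards built by end users": 1}
tool_b = {"monthly reporting hours": 5, "customer record completeness %": 85,
          "dashboards built by end users": 7}

# Sorting on the tuple ranks must-have status first, nice-to-haves second.
ranked = sorted({"Tool A": tool_a, "Tool B": tool_b}.items(),
                key=lambda kv: evaluate(kv[1]), reverse=True)
print([name for name, _ in ranked])
```

Here Tool A ranks first even though Tool B does better on the nice-to-have, because Tool B misses both must-have targets.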

Success criteria should also account for different stakeholder perspectives. The criteria that matter to end users often differ from the criteria that matter to managers or IT teams. A tool might score well on management criteria—cost, reporting capabilities, compliance features—while scoring poorly on user criteria—ease of use, speed, flexibility. Both perspectives matter, but they need to be weighted appropriately. A tool that management loves but users avoid won't achieve its intended outcomes regardless of how well it meets management criteria.

With this foundation established, tool evaluation can begin. But the evaluation process itself needs structure. Vendor demos are useful for understanding capabilities, but they're insufficient for predicting success. Supplement demos with realistic testing scenarios.

Realistic testing means using your actual data, or data that closely resembles it, rather than vendor-provided sample data. Sample data is clean, consistent, and structured to make the tool look good. Your data probably isn't. Testing with realistic data reveals how the tool handles the messiness of real-world information.

Realistic testing means involving actual end users, not just managers or IT staff. End users interact with the tool differently. They have different priorities. They encounter different problems. A tool that seems intuitive to someone who's seen multiple demos might be confusing to someone using it for the first time.

Realistic testing means simulating exception cases, not just happy paths. Demos show how things work when everything goes right. Real work involves things going wrong. How does the tool handle incomplete data? What happens when an integration fails? How do you recover from mistakes? These scenarios reveal whether the tool degrades gracefully under stress or becomes unusable.

One evaluation I participated in included a scenario where we deliberately introduced data quality problems—missing fields, inconsistent formats, duplicate records—to see how the tool handled them. Two of the three tools being evaluated became nearly unusable. The third handled the problems smoothly, with clear error messages and straightforward correction workflows. That difference wouldn't have been visible in a standard demo.
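A test like that can be prepared by degrading a copy of a clean export before loading it into each candidate tool. The sketch below shows one possible way to do it, not the procedure used in that evaluation. The field names `email` and `signup_date`, the ISO date format, and the 20 percent rate are all assumptions.

```python
import copy
import random


def inject_quality_problems(records, seed=0, rate=0.2):
    """Return a degraded copy of `records` (a list of dicts).

    Roughly `rate` of the records get each kind of problem. The field
    names "email" and "signup_date" are hypothetical placeholders, and
    dates are assumed to start out as YYYY-MM-DD strings.
    """
    rng = random.Random(seed)        # seeded so every tool sees the same mess
    degraded = copy.deepcopy(records)

    for rec in degraded:
        if rng.random() < rate:
            rec["email"] = None                           # missing field
        if rng.random() < rate and rec.get("signup_date"):
            year, month, day = rec["signup_date"].split("-")
            rec["signup_date"] = f"{day}/{month}/{year}"  # inconsistent format

    # Duplicate records: append copies of a random sample.
    n_dupes = min(len(degraded), max(1, int(len(degraded) * rate)))
    degraded.extend(copy.deepcopy(rng.sample(degraded, n_dupes)))
    return degraded


clean = [
    {"id": i, "email": f"user{i}@example.com", "signup_date": "2024-01-15"}
    for i in range(10)
]
messy = inject_quality_problems(clean)
print(len(clean), "clean records ->", len(messy), "degraded records")
```

Fixing the seed means each tool is tested against the identical set of problems, so differences in behavior come from the tools rather than from the test data.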

Evaluation should also include implementation planning, not as a separate phase after selection, but as part of the selection process itself. Ask vendors to outline what implementation would actually involve. How long will it take? What resources will it require? What are the common problems? What support will be available?

Vendors vary significantly in how they approach implementation. Some provide extensive support and structured processes. Others expect you to figure it out largely on your own. Neither approach is inherently better, but they suit different organizational contexts. An organization with strong technical resources might prefer a hands-off vendor that gives them flexibility. An organization without those resources might need a vendor that provides more guidance.

Implementation planning should also include realistic timelines. Vendor estimates for implementation time are often optimistic. They assume dedicated resources, quick decision-making, and minimal unexpected complications. Real implementations rarely match those assumptions. Build buffer time into your planning. If the vendor says implementation takes three months, plan for four or five. If that timeline doesn't work, you need a different tool or a different approach.
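The buffer arithmetic is simple enough to write down. The sketch below assumes the "three months becomes four or five" example generalizes to multipliers of 4/3 and 5/3. That generalization is my assumption for illustration; your own history with past implementations is a better source for the factors.

```python
import math
from fractions import Fraction

# Multipliers implied by "three months -> plan for four or five".
# Treating them as general-purpose factors is an assumption.
LOW_FACTOR = Fraction(4, 3)
HIGH_FACTOR = Fraction(5, 3)


def planning_range(vendor_months: int) -> tuple[int, int]:
    """Scale a vendor estimate into a (low, high) range of whole months."""
    return (math.ceil(vendor_months * LOW_FACTOR),
            math.ceil(vendor_months * HIGH_FACTOR))


def fits_deadline(vendor_months: int, deadline_months: int) -> bool:
    """True only if even the pessimistic end of the range meets the deadline."""
    _, high = planning_range(vendor_months)
    return high <= deadline_months


print(planning_range(3))      # (4, 5)
print(fits_deadline(3, 4))    # False: the pessimistic estimate is 5 months
```

Checking the deadline against the high end of the range is deliberate: a tool that only fits the schedule if everything goes well is a tool that does not fit the schedule.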

Throughout evaluation, document decisions and reasoning. This documentation serves multiple purposes. It creates accountability for the selection process. It helps onboard people who join the project later. It provides a reference when questions arise about why certain tools were rejected. And it creates institutional knowledge that improves future selection processes.

Documentation doesn't need to be elaborate. A simple log of what was evaluated, what criteria were applied, what concerns were raised, and how they were resolved is sufficient. The goal is to make the reasoning transparent and recoverable, not to create comprehensive reports that nobody reads.
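A log like that can be as small as one structured line per concern. The sketch below is one possible shape, assuming JSON lines as the storage format. The field names and both sample entries are hypothetical.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class LogEntry:
    day: str               # ISO date the concern was raised
    tool: str
    criterion: str
    concern: str
    resolution: str = ""   # left empty while the concern is still open


def to_line(entry: LogEntry) -> str:
    """Serialize one entry as a single JSON line, ready to append to a file."""
    return json.dumps(asdict(entry))


def open_concerns(lines) -> list[dict]:
    """Return the entries that have no recorded resolution yet."""
    entries = [json.loads(line) for line in lines if line.strip()]
    return [e for e in entries if not e["resolution"]]


# Hypothetical entries; in practice each line would be appended to a shared file.
log = [
    to_line(LogEntry("2024-03-04", "Tool A", "CRM integration",
                     "No native connector",
                     "Rejected: custom build exceeds budget")),
    to_line(LogEntry("2024-03-06", "Tool B", "Ease of use",
                     "Two testers could not find the export option")),
]

unresolved = open_concerns(log)
for entry in unresolved:
    print(entry["tool"], "-", entry["concern"])
```

Keeping the resolution in the same record as the concern is what makes the reasoning recoverable: anyone reading the log later sees both what worried the team and what they decided to do about it.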

After selection but before full implementation, run a pilot. Pilots reveal problems that testing misses because they involve sustained use over time rather than brief evaluation sessions. Pilots show how the tool fits into daily workflows, how it handles volume, how it performs under realistic conditions.

Pilots should be large enough to be meaningful but small enough to be manageable. A pilot with three users might not reveal problems that only emerge at scale. A pilot with fifty users might be too large to course-correct if problems arise. Ten to twenty users, representing different roles and use cases, typically provide good coverage without excessive risk.

Pilots should have defined success criteria and explicit decision points. What needs to be true for the pilot to be considered successful? What problems would be serious enough to reconsider the selection? When will those assessments happen? Without this structure, pilots tend to drift, continuing indefinitely without clear resolution.

One organization I worked with ran a three-month pilot with fifteen users across three departments. At the end of each month, they assessed whether the tool was meeting success criteria and whether any blocking issues had emerged. The first month revealed several workflow mismatches that required configuration changes. The second month showed improvement but identified training gaps. The third month confirmed that the tool was working as intended. Without those structured check-ins, the problems from month one might have persisted indefinitely, or the tool might have been abandoned prematurely before the improvements from month two took effect.
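Structured check-ins of this kind can be reduced to a small decision rule agreed on before the pilot starts. The sketch below is a hypothetical rule, not the one that organization used: the three outcomes, the counts of criteria met, and the policy of reconsidering only at the final checkpoint are all assumptions chosen to mirror the three-month example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    CONTINUE = "continue"
    ADJUST = "adjust and continue"
    RECONSIDER = "reconsider selection"


@dataclass
class Checkpoint:
    month: int
    criteria_met: int
    criteria_total: int
    blocking_issues: int


def decide(cp: Checkpoint, final_month: int) -> Decision:
    """Apply a pre-agreed rule at each check-in (hypothetical policy)."""
    on_track = cp.blocking_issues == 0 and cp.criteria_met == cp.criteria_total
    if on_track:
        return Decision.CONTINUE
    if cp.month >= final_month:
        # Still off track at the last checkpoint: time to revisit the choice.
        return Decision.RECONSIDER
    # Off track with time remaining: fix configuration or training, then recheck.
    return Decision.ADJUST


# Illustrative numbers only, loosely following the three-month pilot above.
pilot = [
    Checkpoint(month=1, criteria_met=2, criteria_total=5, blocking_issues=1),
    Checkpoint(month=2, criteria_met=4, criteria_total=5, blocking_issues=0),
    Checkpoint(month=3, criteria_met=5, criteria_total=5, blocking_issues=0),
]

decisions = [decide(cp, final_month=3) for cp in pilot]
for cp, d in zip(pilot, decisions):
    print(f"Month {cp.month}: {d.value}")
```

The value of writing the rule down in advance is less the code than the commitment: the team agrees on what "reconsider" means before anyone is invested in the answer.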

This evaluation framework requires more time and effort than checking feature lists and watching demos. It involves work that feels tedious—mapping workflows, documenting constraints, running realistic tests. But that work consistently predicts implementation success more accurately than faster approaches.

The framework also requires involving more people. End users need to participate in workflow mapping and realistic testing. IT teams need to assess technical constraints and integration requirements. Managers need to define success criteria and approve pilot plans. This involvement takes time and coordination. It also builds buy-in and surfaces concerns early when they're easier to address.

The framework isn't a guarantee. Tools can still fail even with thorough evaluation. But the failures tend to be smaller and more recoverable. Problems get identified during pilots rather than after full deployment. Mismatches between tool and context get caught during realistic testing rather than discovered months into implementation. The evaluation process itself builds understanding that helps with implementation even when unexpected problems arise.

For organizations currently evaluating tools, the framework provides a structure for moving beyond vendor demos and feature checklists. It doesn't eliminate judgment or guarantee perfect decisions. But it shifts evaluation from asking "what can this tool do?" to asking "how will this tool work in our specific context?" That shift in question leads to better predictions about implementation success.

The tools that work aren't necessarily the ones with the most impressive demos or the longest feature lists. They're the ones that fit your workflows, respect your constraints, meet your success criteria, and can be implemented with your available resources. Finding those tools requires systematic evaluation that goes beyond surface-level assessment. It requires work. But it's work that pays off in implementations that actually succeed rather than becoming expensive lessons in the gap between capability and usability.